Staticman: An Alternative to Disqus for Comments on Static Sites

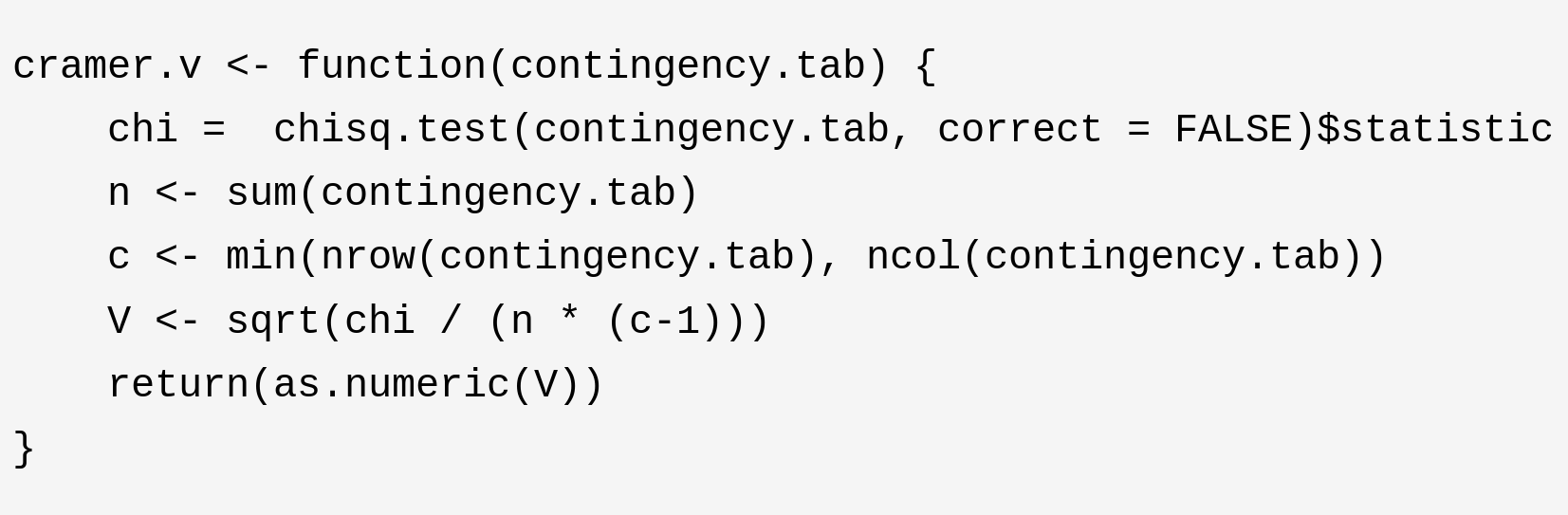

Comments are an important part of many websites, particularly blogs, whose success often depends on building a community. However, supporting comments is inherently harder on a static website than on a dynamic one (e.g. one managed through WordPress), because a static site has no server-side application to receive and store submissions. With Hugo, comments can be integrated easily via Disqus. The disadvantage is that third-party JavaScript has to be executed in the visitor's browser and that the comments are not part of the page itself. Here, I explain how to integrate comments into a web page using Staticman.